DOI: 10.1109/HRI.2013.6483611

Zlotowski, J., & Bartneck, C. (2013). The inversion effect in HRI: are robots perceived more like humans or objects? Proceedings of the 8th ACM/IEEE international conference on Human-robot interaction, Tokyo, Japan, pp. 365-372.

The Inversion Effect in HRI: Are Robots Perceived More Like Humans or Objects?

HIT Lab NZ, University of Canterbury

PO Box 4800, 8410 Christchurch

New Zealand

jakub.zlotowski@pg.canterbury.ac.nz, christoph@bartneck.de

Abstract - The inversion effect describes a phenomenon in which certain types of images are harder to recognize when they are presented upside down compared to when they are shown upright. Images of human faces and bodies suffer from the inversion effect whereas images of objects do not. The effect may be caused by the configural processing of faces and body postures, which is dependent on the perception of spatial relations between different parts of the stimuli. We investigated whether the inversion effect applies to images of robots in the hope of using it as a measurement tool for robots’ anthropomorphism. The results suggest that robots, similarly to humans, are subject to the inversion effect. Furthermore, there is a significant but weak linear relationship between the recognition accuracy and perceived anthropomorphism. The small variance explained by the inversion effect renders this test inferior to the questionnaire-based Godspeed Anthropomorphism Scale.

Keywords: human-robot interaction; inversion effect; anthropomorphism; methodology.

Anthropomorphism is one of the factors that can impact robots’ acceptance in natural human environments and physical spaces. Epley et al. [1] defined anthropomorphism as attribution of humanlike properties or characteristics to real or imagined nonhuman agents and objects. Previous studies under the Computers are Social Actors paradigm showed that even computers can be treated socially by people [2]. Due to their physical presence robots could benefit from this powerful human tendency [3].

The relationship between anthropomorphism and acceptance is rather complex. Foner [4] argued that interfaces based on strongly anthropomorphic paradigms in human-computer interaction lead to overly high expectations that cannot be met by the system. Moreover, Duffy [5] emphasized the importance of considerate design and use of robots’ anthropomorphism in order to form meaningful interactions between people and robots. He proposed that anthropomorphism should not be used as a solution to all HRI problems, but rather as a means to facilitate an interaction when it is beneficial. Robots’ embodiment should always be designed in a way that matches their tasks [6]. Tondu and Bardou [7] proposed using gestalt theory in order to choose an appropriate form of a robot’s embodiment. On the philosophical level, Agassi [8] suggested that anthropomorphism is a form of parochialism that helps us to project our limited knowledge onto a world that we do not understand. However, apart from embodiment, other types of anthropomorphic form exist [9].

In human-human interaction people form an impression of others within the first seconds to minutes time frame [10]. Moreover, the first impression can have lasting consequences in HRI as well [11, 12]. However, there are also studies that indicate early conceptions can change during the course of an interaction. After interacting with a robot people tended to anthropomorphize it more [13]. This change in user’s perception of a robot has been shown in infant-robot interaction [14]. Furthermore, people tend to judge a robot’s physical appearance and its capabilities in relation to its role [15].

Nevertheless, embodiment plays an important role in the perception of anthropomorphism, especially in the phase before the actual interaction is initiated. Hence, it is not surprising that in recent years extensive research has been conducted with the aim of better understanding the impact that embodiment has on people’s behavior whilst interacting with robots.

Fischer et al. [16] showed that physical embodiment and degrees of freedom affect HRI. The former influences how deeply a robot is perceived as an interaction partner, whilst the latter affects how users project the suitability of a robot for its current task. Moreover, a user’s personality can affect preferences regarding a robot’s physical appearance [17]. Other factors, such as crowdedness, can influence what type of physical appearance will lead to a longer interaction [18]. Furthermore, Hegel and colleagues [19] found that an increase in the humanlikeness of a robot’s embodiment leads to the attribution of higher intelligence and to different cortical activity.

Since the role of embodiment is rather complex and still not well understood, there is no doubt that it will remain one of the research focus areas in forthcoming years. Embodiment also emphasizes the importance of choosing an appropriate physical appearance for social robots that will enter human spaces. Therefore, it is necessary that robotic system designers are able to assess the level of anthropomorphism of various embodiments. Questionnaires are among the most popular measurement tools used in HRI research, e.g. [11]. However, there are some attempts to develop alternative methods. Kriz et al. [20] used robot-directed language as a tool for exploring people’s implicit beliefs toward robots. Moreover, Admoni and Scassellati [21] proposed that an understanding of mental models of robots’ intentionality can guide the design process of robots. In addition, Ethnomethodology and Conversation Analysis shed more light on our understanding of HRI [14].

Bae and Kim [22] took a different approach. They were interested to see whether visual cognition allots more attention to robots with animate or inanimate forms. In order to answer their question, they conducted a change detection experiment in which participants briefly saw two images involving a robot and were asked to decide whether the images were identical or not. In this paradigm, change detection depends on the allocation of visual attention to the perceived changes [23]. They found that the participants detected changes more swiftly in animate than in inanimate robots.

This paper presents a similar approach to [22]. We used images of robots and explored their relation with visual cognition in an attempt to validate a new method for measuring robots’ anthropomorphism based on their physical appearance. However, compared with [22], we utilized a different paradigm, known as the inversion effect.

A. Inversion effect

The inversion effect is a phenomenon in which upside down objects are significantly more difficult to recognize than upright objects [24, 25]. It was originally reported for the recognition of faces [24] and explained as a result of the different processing used for different types of stimuli [25]. Configural processing, used for the identification of human faces, involves the perception of spatial relations among the features of a face (e.g. the eyes are always in a certain configuration with the nose). On the other hand, during the recognition of objects, the spatial relations are not taken into account and this type of processing is called analytic [26].

While most objects are recognized by the presence or absence of individual parts, the recognition of faces involves configural processing, which requires specific spatial relations between face parts [27, 28, 29]. Furthermore, body postures produce inversion effects similar to those of faces [30], which suggests that a different processing mechanism is involved in the recognition of body postures compared to inanimate objects [26]. Moreover, configural body posture recognition requires the whole body rather than merely body parts, and the posture must be physically possible [26].

In addition, it has been shown that people who become experts in certain categorization of objects (e.g. a specific breed of dogs) can recognize them using configural processing and exhibit a similar handicap of performance due to the inversion effect as shown for faces [31]. Diamond and Carey [32] suggested that there are three conditions necessary for the inversion effect to occur:

- The members of the class must share configuration

- Distinctive configural relations among the elements must enable individuating members of the class

- Subjects must have expertise in order to exploit such features

In relation to robots, Hirai and Hiraki [33] conducted a study that investigated whether the appearance information of walking actions affects the inversion effect. The event-related potential (ERP) indicated that the inversion effect occurred only for animations of humans. Thus, a robotic walking animation was processed differently than a human body.

Recent studies suggested that under certain conditions the human body can be perceived analytically rather than configurally. Bernard et al. [34] showed that due to the objectification of sexualised women’s bodies, their recognition is not handicapped by the inversion effect. However, sexualised men’s bodies exhibit the inversion effect like non-sexualised body postures. The gender of participants did not play a role in this phenomenon. Gervais [35] found that women’s bodies were reduced to their sexual body parts and that this led to them being perceived as objects on the cognitive level.

These findings show that non-sexualised human body postures and faces are perceived differently than other objects. Furthermore, humans are able to objectify other human bodies. In this paper we explore whether they are also able to anthropomorphize robots’ embodiment, as robots can share certain physical characteristics of human bodies. Due to practical concerns, we used images of robots rather than real robots, a choice on which we elaborate in the Limitations and future work section. First, we tested whether robots elicit the inversion effect. If their recognition is handicapped in the upside down position compared to the upright one, it would suggest that they are configurally processed and are therefore viewed more like humans. Alternatively, if there is no significant decrease in recognition, this would mean that they are seen as objects. Second, we attempted to validate the magnitude of the inversion effect as a method for measuring anthropomorphism. If there is a relationship between the handicap due to the inversion effect and a robot’s perceived anthropomorphism, it will be possible to estimate the humanlikeness of a robot’s embodiment by measuring the magnitude of the inversion effect. Such an assessment tool could support robotic system designers in choosing between different embodiments for a platform by providing additional information about their level of anthropomorphism.

Method

This experiment was conducted as a within-subjects design with two factors: image type (object vs robot vs human) and orientation (upright vs upside down). The recognition accuracy (whether an image is recognized correctly as same or different) and reaction time were measured for each pair of images as dependent variables. Furthermore, the Godspeed Anthropomorphism Scale was used for each image in order to measure its perceived level of anthropomorphism.

A. Measurements

The whole experiment was programmed and conducted using E-Prime 2 Professional Edition. We measured the correctness of participants’ responses and their reaction times with millisecond precision. To measure the perceived anthropomorphism of stimuli we used a slightly modified version of the questionnaire-based Godspeed Anthropomorphism Scale [11]. Since the original questionnaire included a subscale that cannot be measured with static stimuli (Moving rigidly - Moving elegantly), we removed it. The remaining 4 semantic differential scales were used in their original English version.

B. Materials and apparatus

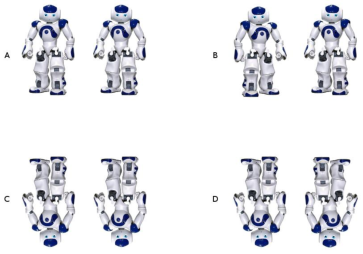

The robot stimuli were created in the following way. We selected pictures of real robots, each showing only one robot. We opted for images depicting the full bodies of robots rather than their faces, as we wanted to include the full range of robots’ embodiments and a robot is anthropomorphic to some degree merely by having a face. Although the context provided by the background can play a role in the perception of a robot’s anthropomorphism, in the presented study it would be a confounding variable, as it could indicate which images were rotated. Therefore, following the procedure of previous experiments on the inversion effect, we coloured the background and shadows in the images completely white. Furthermore, any letters or numbers present on a robot were removed, as they could have provided additional hints for recognizing images. We used a wide spectrum of existing, non-fictional and non-industrial robots, ranging from Roomba and Roboscooper to the more humanlike ASIMO and Geminoids. In total there were 33 different robots that varied in the shape and structure of their bodies (see Figure 1).

To be able to measure the correctness of the response, we had to create distractors, so that the participants were able to choose between a correct and an incorrect response. Distractors were created by mirroring each robot, as proposed by [34]. This method was chosen as it ensured that the modification is comparable between different robots as well as different types of stimuli. The robots were centered on the image and they were in poses that are horizontally and vertically asymmetric. In other words, the right half of an image differed from the left, and the top half differed from the bottom. Therefore, they were distinguishable from their distractors. For each robot we created one pair consisting of the original image and its exact copy, and another pair consisting of the original image and its distractor (mirrored image). Therefore, there were 33 same-robot and 33 different-robot pairs. Finally, all robot pairs were rotated 180° to create the upside down robot stimuli. All possible combinations of image pairs (trials) can be seen in Figure 2.
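The pair construction described above can be sketched on a toy pixel grid. This is only an illustration: the function names and the tiny 2x3 "image" are hypothetical stand-ins, and the sketch assumes that in upside down trials both the original and its distractor are rotated by 180°.

```python
# Toy "image": rows of pixel values (hypothetical stand-in for a robot photo).

def mirror(image):
    """Flip left-right; this is how a distractor is derived from an original."""
    return [row[::-1] for row in image]

def rotate_180(image):
    """Rotate by 180 degrees: reverse the row order and reverse each row."""
    return [row[::-1] for row in image[::-1]]

def make_pairs(image):
    """Build the four trial types (A-D) for one image."""
    distractor = mirror(image)
    return {
        "upright_same": (image, image),
        "upright_different": (image, distractor),
        "upside_down_same": (rotate_180(image), rotate_180(image)),
        "upside_down_different": (rotate_180(image), rotate_180(distractor)),
    }

# An asymmetric toy image, so that mirroring produces a visibly different image.
original = [[1, 2, 3],
            [4, 5, 6]]
pairs = make_pairs(original)
```

Mirroring only yields a usable distractor when the image is not left-right symmetric, which is why the asymmetry of the poses matters.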

Fig. 1. Sample of 6 images of robots with different embodiments used in the study.

Fig. 2. Image pairs used for a single image of a robot. A: Example of same trial in upright condition - original image paired with its copy. B: Example of different trial in upright condition - original image paired with its distractor. C: Example of same trial in upside down condition - original image rotated 180° paired with its copy. D: Example of different trial in upside down condition - original image rotated 180° paired with its distractor.

Exactly the same procedure was used to create the human body and object stimuli. Thirty-three pictures of real people were included so that the number of pairs was the same as for the robots. Since these were real pictures, all body postures were natural and physically possible. We used full-body images of men and women, presented in a non-sexual way (their bodies were covered by clothes) to ensure that sexual objectification would not affect the results [34]. The objects category included pictures of various types of home appliances, such as a dishwasher, TV or telephone. The number of object pairs was exactly the same as for the two other categories of stimuli.

C. Procedure

Each participant was seated approximately 0.5 m from a 21.5” Macintosh computer monitor with the Windows XP operating system. The resolution was set to 1920x1080 pixels. Each participant was allowed to adjust the height of the chair so that their eyes were at the same level as the center of the screen. Participants were informed that the experiment consisted of 2 parts. In the first part, their task was to decide whether a pair of images was exactly identical. In the second, they had to fill in an anthropomorphism questionnaire.

Before the actual experiment began, participants had a practice round to familiarise themselves with the procedure. It included a total of 11 stimulus pairs of different types and orientations. The procedure for evaluating each stimulus in the practice round was identical to the procedure in the actual experiment. Participants were shown a plus sign that indicated the fixation point in the center of the screen for 1000 ms, followed by the original stimulus for 250 ms and then a blank screen for 1000 ms. This was followed by a second stimulus that was either a copy or a distractor of the first stimulus and remained on the screen until the participant responded. All images had a resolution of 1024x768 pixels. No mental rotation was required, as both stimuli were displayed either in the upright or in the upside down orientation. Participants pressed either the S key to indicate that the stimuli were absolutely identical or the L key if they were different. Participants were asked to respond as fast and as accurately as possible.
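The timing of a single trial can be written down as a small data structure. The study itself was run in E-Prime, so the Python below is only an illustrative sketch; the names `TIMELINE`, `second_stimulus_onset` and `is_correct` are hypothetical.

```python
# Durations in milliseconds, taken from the procedure description.
TIMELINE = [
    ("fixation", 1000),
    ("first_stimulus", 250),
    ("blank", 1000),
    ("second_stimulus", None),  # stays on screen until a key is pressed
]
KEY_SAME = "s"
KEY_DIFFERENT = "l"

def second_stimulus_onset(timeline):
    """Time from trial start until the second stimulus appears."""
    onset = 0
    for name, duration in timeline:
        if name == "second_stimulus":
            return onset
        onset += duration
    raise ValueError("timeline has no second stimulus")

def is_correct(pair_type, key):
    """pair_type is 'same' or 'different'; key is the key that was pressed."""
    expected = KEY_SAME if pair_type == "same" else KEY_DIFFERENT
    return key.lower() == expected
```

With these durations the second stimulus appears 2250 ms after the start of the trial, and reaction time is counted from that point.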

Upon completion of the practice round the actual experiment began, following exactly the same procedure as described above for the practice round. Stimuli were presented in 3 different blocks according to their type (object, robot, human). The order of blocks was counterbalanced, and the order of stimulus pairs within each block was randomized across participants. Each of the blocks included 132 trials, which gave a total of 396 trials.
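The trial counts follow from the design: 33 images x 2 pair types x 2 orientations = 132 trials per block, and 3 blocks give 396 trials. A minimal sketch of such a session is shown below. The counterbalancing scheme (cycling through all six block orders by participant index) and the seeding are assumptions made for illustration, since the text does not specify them.

```python
import itertools
import random

IMAGE_TYPES = ["object", "robot", "human"]
N_IMAGES = 33  # images per type, as reported above

def build_block(image_type, rng):
    """All image x pair type x orientation combinations, in random order."""
    trials = [
        {"type": image_type, "image": image, "pair": pair, "orientation": orientation}
        for image, pair, orientation in itertools.product(
            range(N_IMAGES), ["same", "different"], ["upright", "upside_down"]
        )
    ]
    rng.shuffle(trials)
    return trials

def build_session(participant_index, seed=0):
    """Assumed scheme: cycle through the 6 possible block orders."""
    orders = list(itertools.permutations(IMAGE_TYPES))
    order = orders[participant_index % len(orders)]
    rng = random.Random(seed + participant_index)
    return [build_block(image_type, rng) for image_type in order]

session = build_session(participant_index=0)
total_trials = sum(len(block) for block in session)
```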

Upon completion of the first part, participants were asked to rate each stimulus used in the experiment on 4 subscales of the Godspeed questionnaire of anthropomorphism. All types of stimuli were included and their order randomized. The entire experiment took approximately 40 minutes.

D. Participants

Fifty-one subjects were recruited at the University of Canterbury. They were offered a $10 voucher for their participation. Due to software failure, the data of 4 participants was lost. Out of the remaining 47 participants, 15 were female. There were 23 postgraduate students, 16 undergraduate students, 4 university staff members and 4 participants who did not qualify under any of these 3 categories. Their age ranged from 18 to 58 years with a mean age of 26.26 years. They were from 24 different countries, with New Zealand (15) and China (4) being the most represented. Forty participants had never interacted with a robot or had done so fewer than 10 times. Therefore, we regard them as non-experts in robotics. Only one participant indicated that he had over 100 interactions.

Results

A. Perceived anthropomorphism

Since we slightly modified the Godspeed Anthropomorphism scale it was necessary to ensure that it is still reliable. The internal consistency of the anthropomorphism scale was very good, with a Cronbach’s alpha coefficient of 0.96. The reliability of the Godspeed questionnaire of anthropomorphism with the included 4 subscales is well above the acceptable 0.7 level [36] and therefore the removal of one subscale should not affect the anthropomorphism score.
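For readers unfamiliar with the reliability coefficient, Cronbach’s alpha for k items is k/(k-1) multiplied by one minus the ratio of the summed item variances to the variance of the total score. The sketch below applies this standard formula to invented toy ratings; the numbers are not data from the study and the function name is hypothetical.

```python
from statistics import variance

def cronbach_alpha(ratings):
    """ratings: one row per rated case, each row holding k item scores."""
    k = len(ratings[0])
    # Sample variance of each item (column) across cases.
    item_variances = [variance([row[j] for row in ratings]) for j in range(k)]
    # Sample variance of the summed score across cases.
    total_variance = variance([sum(row) for row in ratings])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)

# Invented ratings on 4 semantic differential items (1-5), for illustration only.
toy_ratings = [
    [1, 1, 2, 1],
    [2, 2, 2, 3],
    [3, 3, 4, 3],
    [4, 5, 4, 4],
    [5, 5, 5, 5],
]
alpha = cronbach_alpha(toy_ratings)
```

Items that rise and fall together across cases push alpha toward 1, which is the pattern a value such as 0.96 indicates.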

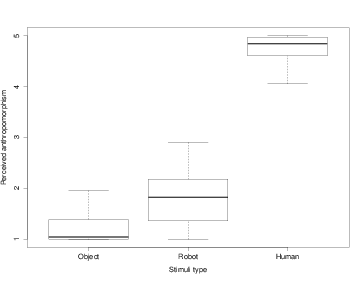

As the scale was regarded as reliable, we obtained the anthropomorphism score for each image by calculating the mean of the 4 subscales. Then, using these scores, we calculated the anthropomorphism score for each stimulus type (object, robot, person) by taking the mean of the scores for all stimuli that belonged to that type. In order to establish whether the type of image affects its perceived anthropomorphism, we conducted a one-way repeated measures analysis of variance (ANOVA). We applied the Huynh-Feldt correction, as Mauchly’s test indicated that the assumption of sphericity was violated (W=0.81, p=0.01). The analysis showed that there was a significant effect of image type [F(1.76,80.74)=623.3, p<0.001, η2G=0.91]. We report here the generalized eta squared to indicate the effect size. It is superior to eta squared and partial eta squared in repeated measures designs, because of its comparability across studies with different designs [37]. Post-hoc comparisons using the Bonferroni correction for the family-wise error rate indicated that perceived anthropomorphism differed significantly between all image types at the level of p<0.001 (object, M=1.32, σ=0.51; robot, M=1.83, σ=0.51; person, M=4.67, σ=0.42) (see Figure 3).

Fig. 3. Perceived anthropomorphism. The level of anthropomorphism based on Godspeed questionnaire presented for each type of stimuli.

B. Inversion effect

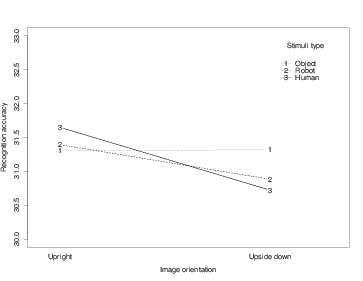

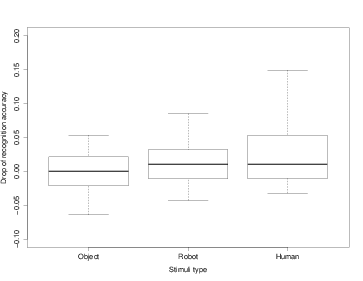

To test the hypothesis that robots, similarly to human body postures, produce an inversion effect, we conducted a 2x3 repeated measures ANOVA with the factors orientation (upright vs upside down) and image type (object vs robot vs person). We used the accuracy score (whether two images were correctly recognized as same or different) as the dependent variable. We calculated a mean accuracy score for each image type in both orientations to obtain six scores (theoretical range 0-33). The analysis showed that the main effect of orientation was statistically significant [F(1,46)=14.36, p<0.001, η2G=0.03]. More images were recognized correctly in the upright (M=31.45, σ=1.22) than in the upside down (M=30.98, σ=1.71) position. However, this main effect can be explained as a result of the statistically significant interaction between orientation and image type [F(2,92)=4.97, p=0.01, η2G=0.02]. If robots elicit configural processing, then the interaction effect should be significant for images of robots, but not for objects. Confirming this assumption, the interaction effect was found for people [F(1,46)=13.77, p<0.001, η2G=0.08]. Recognition accuracy decreased for upside down (M=30.72, σ=1.89) compared to upright (M=31.65, σ=1.19) images of people. Similar results were found for images of robots, where the interaction effect was significant [F(1,46)=7.25, p=0.01, η2G=0.04]. Upright images of robots were recognized more accurately (M=31.39, σ=0.95) than upside down ones (M=30.88, σ=1.57) (see Figure 4). The interaction effect was not statistically significant for objects, and no other interaction or main effects were found.

Fig. 4. The interaction effects between type of stimuli and orientation. The drop of the recognition accuracy is visible when comparing upside down with upright orientation for human and robot stimuli. The inversion does not affect recognition of objects.

The same statistical analysis as for the recognition accuracy was applied to the reaction times, using a 2x3 repeated measures ANOVA. All main effects and interactions were significant. The main effect of image type was statistically significant [F(2,92)=14.56, p<0.001, η2G=0.05]. Post-hoc comparisons using the Bonferroni correction for the family-wise error rate indicated that the mean reaction times of all image types differed significantly from each other at the level of p<0.001 (object, M=879.88 ms, σ=62.97; robot, M=975.05 ms, σ=131.96; human, M=1072.23 ms, σ=146.04). Furthermore, reaction time was significantly longer [F(1,46)=12.33, p<0.001, η2G=0.01] for upside down (M=1004.41 ms, σ=158.1) compared to upright images (M=947.03 ms, σ=118.84).

C. Establishing validity of the inversion effect as a method for estimating anthropomorphism

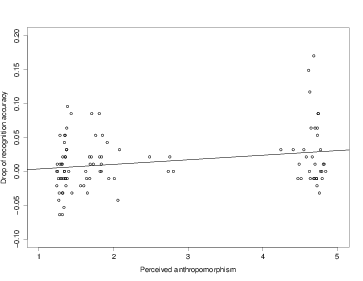

The above analysis indicated that the 3 image types differed in their perceived level of anthropomorphism and that the robot and human body images elicited the inversion effect. In the following step we tested the relationship between the change in recognition accuracy of images due to rotation and their perceived anthropomorphism. We calculated the percentage of correct responses provided by all participants for each image in the upright and upside down orientations. Then, we subtracted the percentage of accuracy in the upside down orientation from that in the upright orientation for each image. The outcome is a measure of the handicap caused by the inversion effect for an image (Figure 5). Since the magnitude of the inversion effect is greatest for human body postures (which are also the most anthropomorphic), a positive linear relationship between anthropomorphism and the drop in recognition accuracy should be found.

Fig. 5. Magnitude of inversion effect by stimuli type. Percentage difference of recognition accuracy between upright and upside down images grouped by image type.
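The handicap measure described above is a simple difference of accuracies per image. The sketch below expresses accuracy as a proportion between 0 and 1, which is an assumption about the scale; the responses are invented for illustration and the function names are hypothetical.

```python
def proportion_correct(responses):
    """responses: list of 1 (correct) and 0 (incorrect), pooled over participants."""
    return sum(responses) / len(responses)

def inversion_handicap(upright_responses, upside_down_responses):
    """Upright accuracy minus upside down accuracy for one image."""
    return proportion_correct(upright_responses) - proportion_correct(upside_down_responses)

# Invented responses for a single image, not data from the study.
upright = [1, 1, 1, 1, 1, 1, 1, 1, 1, 1]      # 10 of 10 correct
upside_down = [1, 1, 1, 0, 1, 1, 1, 1, 0, 1]  # 8 of 10 correct
handicap = inversion_handicap(upright, upside_down)
```

A positive value means the image was recognized less accurately when inverted; a value near zero means inversion made no difference.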

Finally, we paired the perceived anthropomorphism score with the magnitude of the inversion effect for each image. These data were plotted in order to determine the most suitable regression model. Linear regression analysis was used to test whether perceived anthropomorphism predicted the handicap caused by the inversion effect. The results of the regression indicated that the predictor explains 5% of the variance [adjusted R2=0.05, F(1,97)=6.28, p=0.01]. Perceived anthropomorphism was associated with the magnitude of the inversion effect (β=0.007, p=0.01). The regression equation is: inversion handicap = -0.003 + 0.007 * perceived anthropomorphism (Figure 6).

Fig. 6. Relationship between anthropomorphism and a drop in recognition accuracy. This scatterplot presents the relation between the score in the Godspeed questionnaire and the magnitude of the inversion effect for all stimuli, with a regression line (α=-0.003, β=0.007).

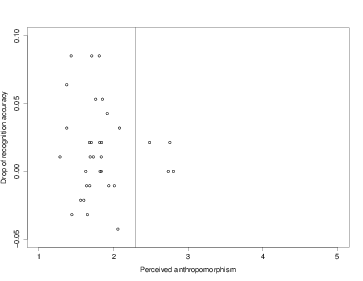
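To give a feel for the size of the reported slope, the regression equation can be evaluated at the mean anthropomorphism scores reported for each stimulus type. The coefficients and means are taken from the text; the function and variable names are hypothetical, and plugging group means into a per-image model is only an illustration.

```python
INTERCEPT = -0.003  # reported regression intercept
SLOPE = 0.007       # reported regression slope

def predicted_handicap(anthropomorphism):
    """Predicted inversion handicap for a given Godspeed anthropomorphism score."""
    return INTERCEPT + SLOPE * anthropomorphism

# Mean perceived anthropomorphism per stimulus type, as reported earlier.
mean_scores = {"object": 1.32, "robot": 1.83, "person": 4.67}
predictions = {name: predicted_handicap(score) for name, score in mean_scores.items()}

# Change in predicted handicap across the full 1 to 5 range of the scale.
full_range_change = predicted_handicap(5) - predicted_handicap(1)
```

Across the whole range of the scale the predicted handicap changes by only 4 x 0.007 = 0.028, which is in line with the small share of variance explained.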

D. Grouping robots

After establishing that there is a linear relationship between the perceived anthropomorphism of an image and decreased recognition accuracy in the upside down position, we were interested to see which robots could be grouped together and how many clusters exist. This was especially important since we used a wide spectrum of robot images, such as machine-like robots (e.g. Roomba), humanoids (e.g. ASIMO) and androids. To accomplish this, we used the data from the previous subsection, restricted to images of robots. The partitioning around medoids (PAM) algorithm [38] was used to determine clusters of robots. Plotting the data suggested that there are 2 clusters, which was further confirmed by the optimum average silhouette width. The PAM algorithm with 2 clusters showed that the first cluster contains the most humanlike robots, the androids, while all the other robots formed the second cluster (see Figure 7).

Fig. 7. Clusters of robots. Two clusters of robots created using the PAM algorithm based on their perceived anthropomorphism and magnitude of inversion effect. The right cluster includes only androids. All the remaining robots formed the other cluster.
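The idea of choosing the number of clusters by average silhouette width can be sketched as follows. This is not the implementation used in the study, which is not specified in the text. For brevity the sketch replaces PAM’s build and swap heuristic with an exhaustive search over medoid sets, which optimises the same objective on small data, and the six points (anthropomorphism, handicap) are invented toy values.

```python
from itertools import combinations

def dist(a, b):
    """Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

def k_medoids(points, k):
    """Exhaustive search for the k medoids minimising total distance to the nearest medoid."""
    def cost(medoids):
        return sum(min(dist(p, m) for m in medoids) for p in points)
    medoids = min(combinations(points, k), key=cost)
    labels = [min(range(k), key=lambda c: dist(p, medoids[c])) for p in points]
    return medoids, labels

def average_silhouette(points, labels):
    """Mean silhouette width; assumes at least two clusters."""
    widths = []
    for i, p in enumerate(points):
        own = [dist(p, q) for j, q in enumerate(points)
               if labels[j] == labels[i] and j != i]
        if not own:
            widths.append(0.0)  # usual convention for singleton clusters
            continue
        a = sum(own) / len(own)  # mean distance within the point's own cluster
        b = min(                 # mean distance to the nearest other cluster
            sum(dist(p, q) for q, l in zip(points, labels) if l == c) / labels.count(c)
            for c in set(labels) if c != labels[i]
        )
        widths.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(widths) / len(widths)

# Invented toy data: (perceived anthropomorphism, inversion handicap).
points = [(1.6, 0.01), (1.7, 0.00), (1.8, 0.02), (1.9, 0.01), (3.0, 0.02), (3.2, 0.01)]
widths_by_k = {k: average_silhouette(points, k_medoids(points, k)[1]) for k in (2, 3)}
best_k = max(widths_by_k, key=widths_by_k.get)
medoids, labels = k_medoids(points, best_k)
```

In this toy example the two groups differ almost entirely along the anthropomorphism axis, so clustering on that axis alone would give the same partition.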

To determine whether those two clusters significantly differ from each other, we analyzed whether the drop in recognition accuracy and the perceived anthropomorphism differed between the images included in the clusters. To analyze this difference in participants’ performance we used paired-samples t-tests. There was no statistically significant difference between the two clusters of robots in the drop in recognition accuracy caused by the inversion effect. However, there was a statistically significant difference in their perceived anthropomorphism [t(46)=8.3, p<0.001]. The cluster consisting of androids was perceived as more anthropomorphic (M=2.35, σ=0.67) than all the other robots (M=1.76, σ=0.27).

Finally, following the same procedure as described above, we used the PAM algorithm to create clusters based only on the 1D data of robots’ perceived anthropomorphism. The results were the same as in the first clustering: we obtained 2 clusters of robots, androids vs all the other robots.

Discussion

Results indicate that 3 types of stimuli significantly differed in their perceived level of anthropomorphism. As could have been expected, people were rated the highest and robots were perceived as more anthropomorphic than objects. It is noteworthy that the average anthropomorphism of robots is closer to objects rather than people.

This study investigated whether the inversion effect could be used as an indicator of the anthropomorphism of robots. The inversion effect is a phenomenon in which an object’s recognition is worse in the upside down than in the upright orientation. It is a result of configural processing of an object, in which spatial relations among parts are used to individuate it from other objects. It is unique to human faces and body postures (and certain objects with which people have expertise). We proposed to use it in HRI when exploring robots’ embodiment.

Our results confirm previous studies (e.g. [24, 30]) on the inversion effect - it was significant for people, but not for objects. Therefore, the recognition of human postures is significantly handicapped when they are in an upside down rather than upright orientation. The inversion effect also affected recognition accuracy of robots. In other words, on the cognitive level robots were processed more like humans than objects. The effect size of the inversion effect in our study needs to be considered as small based on the classification suggested by Bakeman [37] (0.02 - small effect, 0.13 - medium effect, 0.26 - large effect). Furthermore, it was smaller for robots than people, but the difference is still significant in both cases. Since the inversion effect is an indicator of the configural processing, it seems that in order to detect changes in robots’ embodiment, people perceive spatial relations among a robot’s parts rather than just a collection of parts, as is the case with objects. The important implication of this finding is that on the perception level, robots can be perceived differently than objects and potentially elicit more anthropomorphic expectations that can define early stages of an interaction.

These results are also slightly surprising as we included a wide spectrum of robots. Some of them look like objects or merely have a few humanlike body parts, while others are imitations of real people. It is possible that the inversion effect was significant only for the most anthropomorphic robots, such as androids. However, as only 4 of the 33 images showed this type of robot, it is improbable that they would drastically bias the result for all robots. In fact, the clustering separated androids from the other robots, but when we compared these 2 clusters on the magnitude of the inversion effect, there was no significant difference. Therefore, the more plausible explanation is that some other types of robots are processed configurally as well.

Our results are inconsistent with the previous study which showed that the inversion effect was not present for a robotic walking animation [33]. We hypothesize that the difference in findings is due to the robotic stimuli used in the experiments. In our study we have used images of real robots. However, the other study involved an animated robot that was made of simple geometrical figures. It is possible that they were perceived as separate parts rather than a full robotic body.

It is also interesting to see that there is a discrepancy in the perceived anthropomorphism of robots between the self-report and cognition. The results of the Godspeed questionnaire indicate that people perceive robots’ anthropomorphism as closer to that of objects than that of human beings. However, the results of the inversion effect point in exactly the opposite direction: robots were perceived more like humans. It is possible that the participants adapted their responses to socially acceptable ones, e.g. they did not want to look as if they perceived robots to be almost humanlike. Furthermore, since they were asked to rate images of people as well, they might have used them as the top extreme, unreachable for robots. However, if they had been asked to rate only the robots, they might have rated some of them as more anthropomorphic, since androids would have become the upper extreme. In any case, this study indicates that the results of self-reports can be affected by various conditions and that there is a need for cognitive measures that are not easily influenced.

The impact of the inversion effect on recognition accuracy and reaction time indicates that upside down images, compared with upright images, were not only recognized worse, but participants also took longer to respond to them. This is probably an expected outcome since upside down images are harder to recognize. However, the analysis of the results also shows that the reaction time differed between the types of images. Participants responded fastest to images of objects, followed by robots and humans. This suggests that with an increased level of anthropomorphism it takes longer to recognize an image. Nevertheless, it is important to note that despite the increased reaction time between these conditions, no difference was found in recognition accuracy.

The analysis of the relationship between the inversion effect and perceived anthropomorphism indicates that there is a significant linear relationship. The higher the perceived anthropomorphism of a stimulus, the bigger the handicap of the inversion effect. However, the model is able to explain only a small fraction of the variance (5%). This is an unsatisfactory result for recommending the proposed method over existing tools for measuring the level of anthropomorphism of a robot’s embodiment. This conclusion is further supported by the results of clustering robots. While clustering on both the perceived anthropomorphism and the inversion effect indicated 2 clusters, exactly the same result could have been obtained using only the former scale. These 2 clusters did not significantly differ when we compared the drop in recognition accuracy from upright to upside down orientation. Therefore, the inversion effect was not significantly higher for the most anthropomorphic robots compared to the other types of robots. We conclude that the Godspeed Anthropomorphism Scale is a more appropriate method for measuring anthropomorphism, as it permitted better discriminability between clusters of robots and the inversion effect explained only 5% of the variance.
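To make the "variance explained" figure concrete, the sketch below fits a least-squares line and reports the coefficient of determination (R^2). It is an illustration under stated assumptions, not the analysis script used in the study: the function name `linear_fit` and the sample values are hypothetical, and the predictor is assumed to be a mean anthropomorphism rating per stimulus with the outcome being the drop in accuracy from upright to upside down.

```python
def linear_fit(x, y):
    """Fit y = intercept + slope * x by ordinary least squares.

    Returns (slope, intercept, r_squared), where r_squared is the fraction
    of the variance in y that the fitted line accounts for.
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


# Hypothetical per-stimulus values, invented for illustration only:
# mean anthropomorphism rating and drop in accuracy (upright minus inverted).
anthropomorphism = [1.5, 2.0, 2.5, 3.0, 3.5, 4.0]
accuracy_drop = [0.08, 0.02, 0.11, 0.04, 0.12, 0.07]

slope, intercept, r2 = linear_fit(anthropomorphism, accuracy_drop)
print(f"slope={slope:.3f}, R^2={r2:.2f}")
```

An R^2 of 0.05 from such a fit would correspond to the 5% of variance reported here. With enough observations a slope can be statistically significant while R^2 stays this small, which is why significance alone does not make the inversion effect a useful predictor of perceived anthropomorphism.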

In this study we managed to develop and validate a new measurement tool of anthropomorphism that uses involuntary responses. The additional analysis suggests that this tool is inferior to existing measurement instruments. Nevertheless, an equally important contribution of this paper is in showing that on the perceptual level robots are processed more like humans than objects. Expertise with a certain type of stimuli, often used to explain the inversion effect, cannot explain this finding, since the majority of our participants had no or very little experience with robots. Therefore, it is more sound to assume that robots have certain human characteristics that lead observers to engage cognitive processes similar to those used when recognizing other people.

Limitations and future work

In this experiment we used images of robots rather than actual robots. We acknowledge that this decision could have introduced a bias in the obtained results. On the other hand, previous research on the inversion effect also tested images while generalizing the results to real-world people and objects, as it is the only viable option. Therefore, we believe that our findings are applicable to actual robots as well. There are numerous practical concerns that should be considered for this type of experiment. A financial constraint is definitely an issue - our lab is unable to buy 33 different robots that could represent such a wide spectrum of robots. Moreover, it would be extremely difficult to present any type of physical stimuli with millisecond precision, and hanging real humans upside down is quite a challenging task.

We used 33 images of robots that varied in the shape and structure of their bodies. Therefore, we are fairly confident that our results are generalizable to the non-industrial robots that are currently available. It is quite possible that in years to come there will be robots with embodiments that differ from those used in this experiment, and the study will then need to be repeated.

Our findings show that robots can be processed on the cognitive level as humans rather than objects. Consequences of this perception are especially relevant before actual HRI is initiated, as embodiment can affect users’ expectations regarding robots’ capabilities and willingness to initiate interaction. However, as previous research suggests, during the course of an interaction, the early conceptions regarding robots can be altered, e.g. [13, 15].

We found that mirroring images in order to create distractors might not be an optimal method. We suggest that in future studies on the inversion effect with robots, a more subtle modification could result in better discriminability of different types of stimuli.

Our study indicates that at least some of the robots are processed configurally. However, it is possible that only certain types of robots are processed configurally, while others are processed analytically. Future studies could investigate whether the inversion effect is unique to the most human-like robots, such as androids, or whether it is common to all types of robots. Promising directions for future experiments that can shed more light on this phenomenon include exploring the inversion effect with industrial robots, popular media robots and toys with an anthropomorphic appearance. Analysis of the differences between robots that evoke the inversion effect and those which do not should help us to better understand what characteristics are required for a stimulus to be perceived configurally. Finally, although images of robots and the human body can be processed as configural stimuli, it is still possible that on the neural level different processing streams are involved.

Acknowledgments

The authors would like to thank the anonymous reviewers for their insightful and valuable comments that helped to improve the quality of the paper.

References

[1] N. Epley, A. Waytz, and J. T. Cacioppo, “On seeing human: A three-factor theory of anthropomorphism,” Psychological review, vol. 114, no. 4, pp. 864–886, 2007.

[2] B. Reeves and C. Nass, “The media equation,” 1996.

[3] C. Bartneck, T. Kanda, H. Ishiguro, and N. Hagita, “My robotic doppelganger - a critical look at the uncanny valley theory,” in 18th IEEE International Symposium on Robot and Human Interactive Communication, RO-MAN2009. IEEE, 2009, pp. 269–276.

[4] L. Foner, “What’s agency anyway? A sociological case study,” in Proceedings of the First International Conference on Autonomous Agents, 1997.

[5] B. R. Duffy, “Anthropomorphism and the social robot,” Robotics and Autonomous Systems, vol. 42, no. 3-4, pp. 177–190, 2003.

[6] J. Goetz, S. Kiesler, and A. Powers, “Matching robot appearance and behavior to tasks to improve human-robot cooperation,” in Proceedings of ROMAN 2003, the 12th IEEE International Workshop on Robot and Human Interactive Communication, Oct.–Nov. 2003, pp. 55–60.

[7] B. Tondu and N. Bardou, “Aesthetics and robotics: Which form to give to the human-like robot?” World Academy of Science, Engineering and Technology, vol. 58, pp. 650–657, 2009.

[8] J. Agassi, “Anthropomorphism and science,” Dictionary of the History of Ideas: Studies of Selected Pivotal Ideas, vol. 1, pp. 87–91, 1973.

[9] C. DiSalvo, F. Gemperle, and J. Forlizzi, Imitating the Human Form: Four Kinds of Anthropomorphic Form. Cognitive and Social Design of Assistive Robots. Available online: http://anthropomorphism.org, 2005.

[10] J. H. Berg and K. Piner, Personal relationships and social support. London: Sage, 1990, ch. Social relationships and the lack of social relationship, pp. 104–221.

[11] C. Bartneck, D. Kulič, E. Croft, and S. Zoghbi, “Measurement instruments for the anthropomorphism, animacy, likeability, perceived intelligence, and perceived safety of robots,” International Journal of Social Robotics, vol. 1, no. 1, pp. 71–81, 2009.

[12] K. Pitsch, K. Lohan, K. Rohlfing, J. Saunders, C. Nehaniv, and B. Wrede, “Better be reactive at the beginning. implications of the first seconds of an encounter for the tutoring style in human-robot-interaction,” in 2012 IEEE RO-MAN, Sep. 2012, pp. 974–981.

[13] S. R. Fussell, S. Kiesler, L. D. Setlock, and V. Yew, “How people anthropomorphize robots,” in Proceedings of the 3rd ACM/IEEE international conference on Human robot interaction, ser. HRI ’08. New York, NY, USA: ACM, 2008, pp. 145–152.

[14] K. Pitsch and B. Koch, “How infants perceive the toy robot pleo. an exploratory case study on infant-robot-interaction,” in 2nd International Symposium on New Frontiers in Human-Robot Interaction - A Symposium at the AISB 2010 Convention, March 29, 2010 - April 1, 2010. The Society for the Study of Artificial Intelligence, 2010, pp. 80–87.

[15] L. Sussenbach, K. Pitsch, I. Berger, N. Riether, and F. Kummert, “‘Can you answer questions, Flobi?’: Interactionally defining a robot’s competence as a fitness instructor,” in 2012 IEEE RO-MAN, Sep. 2012, pp. 1121–1128.

[16] K. Fischer, K. S. Lohan, and K. Foth, “Levels of embodiment: Linguistic analyses of factors influencing hri,” in HRI’12 - Proceedings of the 7th Annual ACM/IEEE International Conference on Human-Robot Interaction, 2012, pp. 463–470.

[17] D. S. Syrdal, K. Dautenhahn, S. N. Woods, M. L. Walters, and K. L. Koay, “Looking good? appearance preferences and robot personality inferences at zero acquaintance,” in AAAI Spring Symposium - Technical Report, vol. SS-07-07, 2007, pp. 86–92.

[18] R. Gockley, J. Forlizzi, and R. Simmons, “Interactions with a moody robot,” in HRI 2006: Proceedings of the 2006 ACM Conference on Human-Robot Interaction, 2006, pp. 186–193.

[19] F. Hegel, S. Krach, T. Kircher, B. Wrede, and G. Sagerer, “Understanding social robots: A user study on anthropomorphism,” in Proceedings of the 17th IEEE International Symposium on Robot and Human Interactive Communication, RO-MAN, 2008, pp. 574–579.

[20] S. Kriz, G. Anderson, and J. G. Trafton, “Robot-directed speech: using language to assess first-time users’ conceptualizations of a robot,” in Proceedings of the 5th ACM/IEEE international conference on Human-robot interaction, ser. HRI ’10. Piscataway, NJ, USA: IEEE Press, 2010, pp. 267–274.

[21] H. Admoni and B. Scassellati, “A multi-category theory of intention.” in Proceedings of COGSCI 2012, Sapporo, Japan, 2012, pp. 1266–1271.

[22] J. Bae and M. Kim, “Selective visual attention occurred in change detection derived by animacy of robot’s appearance,” in Proceedings of the 2011 International Conference on Collaboration Technologies and Systems, CTS 2011, 2011, pp. 190–193.

[23] A. Hollingworth, G. Schrock, and J. M. Henderson, “Change detection in the flicker paradigm: The role of fixation position within the scene,” Memory and Cognition, vol. 29, no. 2, pp. 296–304, 2001.

[24] R. K. Yin, “Looking at upside-down faces,” Journal of experimental psychology, vol. 81, no. 1, pp. 141–145, 1969.

[25] D. Maurer, R. Le Grand, and C. J. Mondloch, “The many faces of configural processing,” Trends in cognitive sciences, vol. 6, no. 6, pp. 255–260, 2002.

[26] C. L. Reed, V. E. Stone, J. D. Grubb, and J. E. McGoldrick, “Turning configural processing upside down: Part and whole body postures,” Journal of Experimental Psychology: Human Perception and Performance, vol. 32, no. 1, pp. 73–87, 2006.

[27] S. Carey, “Becoming a face expert,” Philosophical transactions of the Royal Society of London. Series B: Biological sciences, vol. 335, no. 1273, pp. 95–102, 1992.

[28] S. M. Collishaw and G. J. Hole, “Featural and configurational processes in the recognition of faces of different familiarity,” Perception, vol. 29, no. 8, pp. 893–909, 2000.

[29] H. Leder and V. Bruce, “When inverted faces are recognized: The role of configural information in face recognition,” Quarterly Journal of Experimental Psychology Section A: Human Experimental Psychology, vol. 53, no. 2, pp. 513–536, 2000.

[30] C. L. Reed, V. E. Stone, S. Bozova, and J. Tanaka, “The body-inversion effect,” Psychological Science, vol. 14, no. 4, pp. 302–308, 2003.

[31] J. Tanaka and I. Gauthier, Expertise in Object and Face Recognition, ser. Psychology of Learning and Motivation - Advances in Research and Theory, 1997, vol. 36, no. C.

[32] R. Diamond and S. Carey, “Why faces are and are not special. an effect of expertise,” Journal of Experimental Psychology: General, vol. 115, no. 2, pp. 107–117, 1986.

[33] M. Hirai and K. Hiraki, “Differential neural responses to humans vs. robots: An event-related potential study,” Brain Research, vol. 1165, pp. 105–115, Aug. 2007.

[34] P. Bernard, S. J. Gervais, J. Allen, S. Campomizzi, and O. Klein, “Integrating sexual objectification with object versus person recognition: The sexualized-body-inversion hypothesis,” Psychological Science, vol. 23, no. 5, pp. 469–471, 2012.

[35] S. J. Gervais, T. K. Vescio, J. Förster, A. Maass, and C. Suitner, “Seeing women as objects: The sexual body part recognition bias,” European Journal of Social Psychology, 2012.

[36] J. Nunnally, Psychometric theory. Tata McGraw-Hill Education, 1967.

[37] R. Bakeman, “Recommended effect size statistics for repeated measures designs,” Behavior Research Methods, vol. 37, no. 3, pp. 379–384, 2005.

[38] L. Kaufman and P. J. Rousseeuw, Finding Groups in Data: An Introduction to Cluster Analysis. Wiley-Interscience, Mar. 1990.

This is a pre-print version | last updated April 5, 2013