DOI: 10.1109/TRO.2007.906263

Kooijmans, T., Kanda, T., Bartneck, C., Ishiguro, H., & Hagita, N. (2007). Accelerating Robot Development through Integral Analysis of Human-Robot Interaction. IEEE Transactions on Robotics, 23(5), 1001 - 1012.

Accelerating Robot Development Through Integral Analysis of Human-Robot Interaction

1ATR Intelligent Robotics and Communication Laboratories

Kyoto 619-0288, Japan

kanda@atr.jp, hagita@atr.jp

2Department of Industrial Design

Eindhoven University of Technology

Den Dolech 2, 5600MB Eindhoven, NL

tijn@kooijmans.nu, christoph@bartneck.de

3Osaka University

Dep. of Adaptive Machine Systems

Osaka 565-0871, Japan

ishiguro@atr.jp

Abstract - The development of interactive robots is a complicated process, involving a plethora of psychological, technical, and contextual influences. To design a robot capable of operating “intelligently” in everyday situations, one needs a profound understanding of human-robot interaction (HRI). We propose an approach based on integral analysis of multimodal data to pursue this understanding and support interdisciplinary research and development in the field of robotics. To adopt this approach, a software tool named Interaction Debugger was developed that features user-friendly navigation, browsing, searching, viewing, and annotation of data; it enables fine-grained inspection of the HRI. In four case studies, we demonstrated how our analysis approach aids the development process of interactive robots.

Keywords: Data analysis, human-robot interaction (HRI), integrated approach, Interaction Debugger, multimodal data

Introduction

The growing interest in the field of robotics has increased the possibilities for robots to interact with people in natural ways. Humanoid robots, including Honda’s Asimo [1], Sony’s QRIO [2], and ATR’s Robovie [3], contribute to the shared vision of bringing robots into people’s everyday lives. Whether in the role of a household assistant, social partner, or babysitter, there will always be a need for robots to interact with people and their environments. Therefore, we believe the study of human-robot interaction (HRI) deserves a central role in the robot development process.

Developing interactive robots that will communicate with people in everyday situations is nowadays clearly considered an interdisciplinary process involving psychology, cognitive science, and engineering [4,5,6,7,8]. Engineers study the HRI to develop and improve robots, while psychologists aim for a better understanding of human attitudes, roles, and expectations toward robots. The process is inherently entangled: on the one hand, engineers require behavior frameworks developed by psychologists to help them analyze the HRI; on the other hand, psychologists need to be aware of the technical limitations and possibilities when developing robot behavior and creating observation frameworks. The following scenarios briefly describe how they can use data analysis for such purposes.

First, humanoid robots generally incorporate large numbers of sensors and actuators that are controlled by their artificial brains. One major engineering challenge is to process the acquired sensor information so that the robot can perform an appropriate behavior in a certain situation. By studying sensor data triggered by a user’s action or environmental condition, engineers can design a robot to anticipate this information and produce an appropriate reaction.

Another scenario for studying the HRI has a more psychological-oriented motive. When people interact with a robot, one could analyze their attitudes and behavior toward it. A well-known means for conducting such a study is based on field trials. In [9,10,11,12,13], scenes of a field trial are analyzed and used for evaluation and improvement of robot behavior or design. In the long run, this could yield general knowledge about the HRI.

In both cases, data analysis is used for gaining better understanding of the HRI. Software originally developed for psychologists and linguists is available that aids this work with annotation and coding functionalities [14,15,16]. These, however, are limited to audio and video, decreasing their usefulness, especially for the first scenario. Other tools include body movements [17] or gazes [18] of humans and robots during interaction. However, the application domains of these examples are limited since only one type of data is available in addition to regular audio and video.

By integrating information from such modalities as sound, vision, object positioning, person identification, and body contact with audio and video, one can form a more complete overview of a situation, which leads to more effective analyses. We consider an analysis effective when it aids the development process of the robot or when it leads to increased knowledge about human attitudes toward robots.

Using this integrated approach one could, for instance, analyze which sensor values of a robot are triggered by certain human behavior, or if the internal states of a robot are activated in appropriate situations. From a psychological perspective, one could seek correlations between the distance from and behavior toward a robot or investigate such human attitudes as responses to body contact.

We developed a software tool named Interaction Debugger that helps people analyze multimodal data collected during experiments. This software enables engineers and psychologists to cooperate in the analysis of the HRI that may ultimately lead to the development of this field. Interaction Debugger promotes an integrative working process, which is necessary in the HRI field.

We previously reported a preliminary version of Interaction Debugger [19]. Currently, the software has reached a more mature level and can be applied as a full-fledged workbench to monitor, manage, and analyze experiments. New functionalities such as “data browser” and comprehensive search functions (explained in Section III) are key factors.

First, we will discuss an example setup that highlights the data types that can be included in Interaction Debugger. Next, we will describe the software’s architecture, its functionality, and its workflow. Then, we present several case studies that provide an informal evaluation of the software. Last, we discuss the contributions and limitations of the software before we end with our conclusion.

Example Setup

Before conducting an integral analysis, one first has to gather the necessary data during experiments or field trials. It is essential to consider which modalities and types of data are necessary to collect for later analysis, which depends on the focus of one's study. In this section, we describe an example setup for gathering data during field trials with interactive robots (see Fig. 1). In this setup, we studied the interaction between humans and Robovie and Robovie-M, which are interactive humanoid robots.

Fig. 1. Field trial with Robovie.

Robovie is a communication robot that autonomously interacts with people by speaking and gesturing [3]. Robovie-M is a small version of Robovie that can show autonomous behavior, but has no integrated sensing capabilities. Instead, we used networked sensors in the environment to feed information to the robot. The case studies in Section IV describe the goals of these experiments and the data analysis process using Interaction Debugger in detail. For our study, in addition to regular audio and video, the following modalities of data were collected: sound, vision, person identification, motion, body contact, distance, and robotic behavior. For each modality, we recorded several types of sensory information and/or intermediate variables, which were produced by the robot's software or external sensors. Intermediate variables can provide such information about the robot's state as its active behavior, battery level, or the words it speaks. In Section III-A, we clarify the technical details of data gathering and storage. Here is a complete overview of the data types used in our Robovie study.

- Sound: This contains sensor data of sound level meters in the robot environment as well as internal sensors. Two intermediate variables are related to sound: one indicates if the robot is in the talking mode and the other tells if the robot is in the listening mode.

- Vision: For the eye and omnidirectional cameras used inside the robot, a diff-value is calculated that provides information about the activity in the camera sight. The robot also has a face recognition mechanism that outputs one variable giving the size of a recognized face and another variable that indicates if the robot's sight is blocked.

- Person Identification: Throughout the field trials, we asked people to wear radio frequency identification (RFID) tags. The robot as well as several places in its environment contain an RFID reader, whose software outputs the tags that are in range. This gives information about the people in the robot environment and near it.

- Motion: This includes the motor positions of all the robot's joints.

- Body Contact: The only data type that belongs to this modality is touch sensor information, which indicates the state of each touch sensor: pressed or not.

- Distance: This contains information from distance sensors in the robot and range sensors in the environment. Both are used for detecting objects (including people) around the robot.

- Robotic Behavior: Robovie operates based on programmed behavior modules that are activated using rules. For example, when it detects a new person within a predefined range it activates the greeting behavior. There is also a possibility for "Wizard of Oz" control, which refers to manually activated behavior by someone remotely controlling Robovie. The activated behavior modules as well as the "Wizard of Oz" commands are recorded.

For audio and video, we used the following configuration.

- Audio data are captured by microphones connected to a robot or capturing personal computer (PC) and are stored in consecutive parts, typically 1 min in length, to limit file size and to maintain a well-organized data collection. Using 1-min chunks also increases performance for later data retrieval.

- Video data are captured by cameras in the robot and the environment. Robovie has two eye cameras and one omnidirectional camera above its head. The capturing PCs can connect to multiple cameras. We used Intel's Indeo 4.5 compressor for captured video because it allows forward and reverse play at various speeds with audio, step-play with audio, and forward and reverse frame steps. Video parts were also recorded at lengths of 1 min.
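The 1-min chunking described above implies a simple mapping from a point in time to a media part and a playback position inside it. The following is a minimal sketch of that arithmetic, not the tool's actual code; the class name `ChunkLocator` and its methods are hypothetical, and it assumes that parts are recorded back to back from a known start time.

```java
import java.util.concurrent.TimeUnit;

/** Hypothetical helper (not from the paper): locates a time inside 1-min media parts. */
final class ChunkLocator {
    /** Part length used in the described setup: 1 min. */
    static final long CHUNK_MILLIS = TimeUnit.MINUTES.toMillis(1);

    private ChunkLocator() {}

    /** Zero-based index of the part that contains timeMillis. */
    static long chunkIndex(long recordingStartMillis, long timeMillis) {
        if (timeMillis < recordingStartMillis) {
            throw new IllegalArgumentException("time precedes start of recording");
        }
        return (timeMillis - recordingStartMillis) / CHUNK_MILLIS;
    }

    /** Playback offset in milliseconds inside that part. */
    static long offsetInChunk(long recordingStartMillis, long timeMillis) {
        if (timeMillis < recordingStartMillis) {
            throw new IllegalArgumentException("time precedes start of recording");
        }
        return (timeMillis - recordingStartMillis) % CHUNK_MILLIS;
    }
}
```

Under these assumptions, a request for a time 125 s after the start of recording resolves to the third part (index 2) at an offset of 5 s, so only one short file has to be opened.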

Interaction Debugger

Interaction Debugger was developed to help people analyze data that were collected during experiments or field trials. We are aware that the software is an analysis tool. While it sounds unusual for psychologists to "debug" interaction, in the field of engineering, people's motivation for analysis is to debug their engineering products. Thus, we named this tool "Interaction Debugger" to entice engineers involved in the analysis of the HRI.

There are several requirements for a tool that aids an integral analysis process. We considered the following requirements when developing Interaction Debugger.

- It should be easy to see when data are available for specific types (e.g., video, audio, touch sensor data, etc.) and sources (e.g., robot, sensor PC 1, sensor PC 2, etc.). This is especially useful when experiments are conducted over multiple days or when one wants to compare data from multiple experiments.

- Data relevant for end-users should be easy to find, for example, quick retrieval of an overview of all events where people are around the robot.

- Easy time navigation: quickly jumping between days, hours, and minutes. Furthermore, there should be a simple time controller that supports playback, pausing, frame stepping, and forward and backward dragging.

- The data types should be visualized in a way that is understandable by the people involved in the analysis process.

- A user should be able to bookmark interesting events and freely make annotations about the data.

- Interaction Debugger should be extendible in a way that allows intermediate developers to easily add new data types.

In this section, we outline how Interaction Debugger was developed to meet these criteria from a technical point of view as well as an end-user point of view.

Technical Details

The software was developed using Java 1.5 to realize platform independence and to make it easily extendible (see Section III-C). We used Java Media Framework 2.1.1 to implement audio and video playback.

1) System Architecture:

The Interaction Debugger software can be decomposed into multiple layers and packages to make its structure understandable. The application itself consists of a user interface layer and a layer that contains utilities that provide the underlying functionalities for the user interface (see Fig. 2). Furthermore, we consider some operating system components on which the application runs.

Fig. 2. Logical structure of system architecture.

The application's user interface is a desktop environment that contains packages of tools, utility frames, search frames, and data frames. When discussing frames, we refer to windows inside Interaction Debugger's desktop. The data frames package consists of a series of frames that show data to an end-user from the available data types. These data frames serve the core functionality of the Interaction Debugger, displaying video, audio, sensor information, and intermediate variables. To look for interesting data, we designed several frames that provide search functions including annotations or robot behavior, filter-based searches, and a structured query language (SQL) query search for professional users. The search functions are further clarified in Section III-B. A collection of utility frames is also included, which consists of the following:

- a loading dialog that shows a progress bar when the Interaction Debugger is busy;

- a time controller for navigation and playback of data;

- a time browser to choose a day and time interval to analyze;

- a time information frame that communicates the time to a user while analyzing data.

The final package of the user interface is a set of tools including:

- frames for importing data from and exporting data to a text file;

- a data browser to view when data are available for specific types and sources;

- a frame that enables end-users to synchronize multiple data types. For example, if the audio and video are not exactly synchronized due to unsynchronized capturing systems, the user can set a delay for the faster one.

The utilities of the Interaction Debugger realize the following functionalities.

- The Update Data Thread updates all the data frames when needed, that is, when a new time interval is loaded, when the time controller is dragged, or every 10 ms while the time controller is in playback mode.

- To maintain configurability in the Interaction Debugger, we use a settings framework, which includes functionalities to save and retrieve data from settings files in XML format and to generate a configuration panel that presents the settings to end-users. Settings include a database, file locations, table names, and settings to modify the Interaction Debugger's user interface. It is possible to create more than one settings file to serve multiple projects.

- The Data Frame Manager is the part of the Interaction Debugger responsible for the placement of data frames on the desktop. It remembers the size and position of

2) Controlled and Realtime Modes:

We defined two modes of operation for the Interaction Debugger that use different methods of data retrieval: controlled and realtime (see Fig. 3). Controlled mode is intended for detailed data analysis after an experiment or trial; realtime mode is especially useful for instant optimization or debugging of a robot's behavior. In controlled mode, data are retrieved from a database that contains all the data collected during an experiment or trial. For audio or video data, the actual contents are retrieved from a local or networked file system. Settings are provided in the Interaction Debugger to specify the file and database locations.

In realtime mode, the Interaction Debugger immediately presents data at the event time. In that case, a direct network connection with the capturing PCs and the robots facilitates data retrieval through a transmission control protocol (TCP)/Internet protocol (IP). Realtime audio and video streaming have not yet been implemented in the Interaction Debugger, but will be a valuable future improvement.

3) Data Gathering:

During experiments or field trials, we collected data from multiple sources: the robots and capturing PCs placed in their environment. Fig. 3 illustrates the data flow within this setup. Basically, all captured data are sent to a central place to be stored, which simplifies later data retrieval. In general, each data record consists of a timestamp and a set of values; the format depends on the type of data. For example, a record from a sound level meter holds a single value between 0 and 120 dB. For audio or video, the actual media contents are stored in the file system, and only a filename is stored in the database for reference.

Sensor and intermediate variable data were recorded at intervals of 20-200 ms, depending on the need for precision in analysis. A shorter interval means higher precision, but it also requires more processing capacity as well as more network bandwidth. For audio and video, a value is only sent to the database every 60 s when a new part is recorded.
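To make the trade-off between precision and load concrete, the number of records per data type can be estimated from the recording interval. This is a back-of-the-envelope sketch only; the class `SamplingLoad` is hypothetical and assumes exactly one record per interval.

```java
/** Hypothetical estimate (not from the paper): records produced per hour by one data type. */
final class SamplingLoad {
    private static final long MILLIS_PER_HOUR = 3_600_000L;

    private SamplingLoad() {}

    /** Assumes exactly one record is written every intervalMillis. */
    static long samplesPerHour(long intervalMillis) {
        if (intervalMillis <= 0) {
            throw new IllegalArgumentException("interval must be positive");
        }
        return MILLIS_PER_HOUR / intervalMillis;
    }
}
```

Under that assumption, a 20-ms interval yields 180,000 records per hour for a single data type, ten times the 18,000 records produced at 200 ms.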

Time is an important index when retrieving data later to incorporate them from multiple sources. For this reason, we use the network time protocol (NTP) on all the systems that collect data to synchronize clocks with an accuracy of 10 ms. If in some cases a specific data type is still not synchronized, end-users can manually adjust its timing using the data synchronizer tool in the Interaction Debugger.
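A manual correction of this kind can be thought of as a per-data-type delay that is added to the recorded timestamps before display. The sketch below illustrates the idea under that assumption; `SyncOffsets` and its methods are hypothetical names and do not describe the actual data synchronizer.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical sketch (not from the paper): user-set delays per data type. */
final class SyncOffsets {
    private final Map<String, Long> delayMillisByType = new HashMap<>();

    /** Stores the delay the user chose for one data type, e.g. the faster stream. */
    void setDelay(String dataType, long delayMillis) {
        delayMillisByType.put(dataType, delayMillis);
    }

    /** Time at which a record is shown: recorded time plus the delay, if any. */
    long adjusted(String dataType, long recordedMillis) {
        Long delay = delayMillisByType.get(dataType);
        return recordedMillis + (delay == null ? 0L : delay);
    }
}
```

Data types without an entry keep their recorded timestamps, so only the streams that are visibly out of step need to be adjusted.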

End-User Functionalities

In this section, we elaborate how the Interaction Debugger's main functionalities are made available to end-users. We divide them into navigation, browsing, searching, analyzing, and annotating and describe each in detail. The functionalities apply to the controlled mode of the Interaction Debugger since the realtime mode only aims to show data.

1) Browsing:

Whenever someone wants to analyze data, first he/she needs to know when data are available. The Interaction Debugger incorporates a browser (see Fig. 4) that gives an overview of the availability of all data types relative to time. The initial view provides an overview based on day precision, from where the user can zoom to specific days or even hours. Data types can be sorted either by modality or source. Furthermore, one can filter out certain modalities or sources to browse more purposefully.

2) Searching:

During the analysis process, one is often interested in searching for situations in which useful/interesting data are available. In the HRI, for example, this could be a situation in which there are people around the robot or a robot performs certain behavior. The Interaction Debugger provides several data search methods: annotation search, behavior search, filter search, and query-based search.

- Annotations can be regarded as bookmarks with descriptions made by a user when commenting on a certain event. The annotation search function gives an overview of all the bookmarks and makes it possible to show them in a specified time interval. Refer to Section III-B5 for more details on annotations.

- The next search function is based on robot behaviors. A user can select a specific behavior and a time interval for which all the events in which the specified behavior occurs are shown. The behavior search function requires that the robot store active behavior states.

- The most powerful search function in the Interaction Debugger is filter-based searching (see Fig. 5). When this search function is activated, every data frame obtains a bar at the bottom in which a user can select filters for the data presented in the frame. In this way, a user can combine filters for multiple data types to perform a search operation.

- Professional users experienced with SQL scripting sometimes feel comfortable manually creating search queries. For them, we have implemented a search function that helps compose SQL queries for available data types.

Fig. 5. Filter-based search function.
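Combining filters across data types amounts to finding the moments at which every selected filter holds. The following minimal sketch shows one way to express this; it is an illustration and not the Interaction Debugger's implementation. The names `FilterSearch` and `RangeFilter`, the use of simple value ranges as filters, and the fixed search step are all assumptions.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.NavigableMap;

/** Hypothetical sketch (not from the paper) of a search that combines filters. */
final class FilterSearch {
    /** A filter on one data type; here simply a closed value range. */
    static final class RangeFilter {
        private final NavigableMap<Long, Double> samples; // timestamp (ms) -> value
        private final double min;
        private final double max;

        RangeFilter(NavigableMap<Long, Double> samples, double min, double max) {
            this.samples = samples;
            this.min = min;
            this.max = max;
        }

        /** Uses the latest sample at or before timeMillis, since rates differ per type. */
        boolean matchesAt(long timeMillis) {
            Map.Entry<Long, Double> entry = samples.floorEntry(timeMillis);
            return entry != null && entry.getValue() >= min && entry.getValue() <= max;
        }
    }

    private FilterSearch() {}

    /** Returns every time step in [startMillis, endMillis] at which all filters match. */
    static List<Long> search(List<RangeFilter> filters,
                             long startMillis, long endMillis, long stepMillis) {
        if (stepMillis <= 0) {
            throw new IllegalArgumentException("step must be positive");
        }
        List<Long> hits = new ArrayList<>();
        for (long t = startMillis; t <= endMillis; t += stepMillis) {
            boolean allMatch = true;
            for (RangeFilter filter : filters) {
                if (!filter.matchesAt(t)) {
                    allMatch = false;
                    break;
                }
            }
            if (allMatch) {
                hits.add(t);
            }
        }
        return hits;
    }
}
```

For example, one filter on the sound level and one on a distance sensor would together return only the moments at which the environment is loud and an object is close.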

3) Navigation:

Navigating data in the controlled mode is based on the selection of a time interval, which can vary from 1 min to multiple days, and depends on end-user preferences and computer performance limitations. The user interface includes a time selection panel where one can manually choose a specific date as well as start and end times (Fig. 6.1). After selecting an interval, users can navigate through the data by a time controller that supports regular playback, frame-by-frame skipping, and mouse dragging. While controlling the time, an information window communicates the current interval and time.

Fig. 6. User Interface of Interaction Debugger.

4) Analyzing:

A core functionality of the Interaction Debugger is presenting data comprehensibly using visualizations tailored to the type of data. When using the software, one can open windows for every data type. All data types are accessible from the menu bar of the Interaction Debugger's main window, where they are sorted based on their modality. Fig. 6 shows a number of example windows that can be loaded:

- Figs. 6.2 and 6.3: Video and audio windows that can be simultaneously loaded from multiple sources (see Fig. 7).

- Fig. 6.8: Visualizations of the robot's touch and motion sensors. The former blinks when the robot is touched. The latter are visualized by a 3-D model of the robot that mirrors its motion. Other available windows for robot sensor data present data from ultrasonic distance sensors (see Fig. 8) and RFID tag readers.

- Fig. 6.9: Active behavior states of the robot, which are one of its intermediate variables. The result of each behavior state is displayed, indicating success or failure.

- Fig. 6.10: Environment sound level and a list of people in the robot's environment. The latter is based on RFID tag readings. Another available window for environment sensor data presents data from distance sensors (see Fig. 11, Step 1).

Our implementation of the Interaction Debugger incorporates data presentation windows optimized for Robovie. To increase comprehensibility, we present the robot's sensor data on graphical representations of Robovie, as demonstrated in Fig. 8. The Interaction Debugger also features standard presentation styles including tables and line charts. For such textual data as behavior states and RFID tag readings, we use tables (Figs. 6.9 and 6.10). For single sensor values such as sound levels, we use line charts.

Fig. 7. Video data from multiple camera sources: Environment, robot eye, and omnidirectional cameras.

Fig. 8. Graphical representations of robot sensors.

5) Annotation:

To aid the analysis of the HRIs, an annotation window (Fig. 6.4) has been incorporated in the Interaction Debugger. Inspired by existing audio and video annotation software [14,15,16], this feature allows users to describe every frame of the data collection. For example, researchers could use this functionality to make detailed descriptions of human behavior during empirical studies. Moreover, it can function as a useful bookmark and search method, as explained before.

Extendibility

Since the Interaction Debugger might be used for different types of robots in other situations, it has been designed with a modular software architecture. The graphical presentation of data and their underlying management have been clearly separated, making it easy for intermediate developers to modify or design new presentation styles. Moreover, it enables easy implementation of new data types by extending the AbstractDataFrame class. Fig. 9 shows a unified modeling language (UML) model that describes the structure of an AbstractDataFrame object. It has a DataSource object that manages the retrieval of data from a database in the controlled mode or a TCP/IP socket in the realtime mode. It also contains a DataType object that, in turn, contains information about the data frame: name, modality, source name, and database table. Finally, the AbstractDataFrame object has a set of filter objects that are used for the filter-based search function.

Fig. 9. UML diagram of extendible data frame.
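The structure described above can be sketched in Java as follows. Only the names AbstractDataFrame, DataSource, and DataType and the four DataType fields come from the text; every method, the `Filter` interface, and the `TextDataFrame` subclass are hypothetical and serve only to illustrate how a new data type might be added by extension.

```java
import java.util.ArrayList;
import java.util.List;

/** Information about a data frame, with the four fields named in the text. */
final class DataType {
    final String name;
    final String modality;
    final String sourceName;
    final String databaseTable;

    DataType(String name, String modality, String sourceName, String databaseTable) {
        this.name = name;
        this.modality = modality;
        this.sourceName = sourceName;
        this.databaseTable = databaseTable;
    }
}

/** Retrieval of data; the text names a database and a TCP/IP socket as back ends. */
interface DataSource {
    /** Hypothetical method: the value valid at timeMillis, or null if none. */
    String valueAt(long timeMillis);
}

/** Hypothetical filter used by the filter-based search. */
interface Filter {
    boolean accepts(String value);
}

/** Separates data management (here) from presentation (in subclasses). */
abstract class AbstractDataFrame {
    private final DataType dataType;
    private final DataSource dataSource;
    private final List<Filter> filters = new ArrayList<>();

    protected AbstractDataFrame(DataType dataType, DataSource dataSource) {
        this.dataType = dataType;
        this.dataSource = dataSource;
    }

    DataType getDataType() {
        return dataType;
    }

    void addFilter(Filter filter) {
        filters.add(filter);
    }

    /** Fetches the value for the given time and hands it to the presentation code. */
    final void update(long timeMillis) {
        render(dataSource.valueAt(timeMillis));
    }

    /** True if a value exists at timeMillis and every filter accepts it. */
    boolean matchesFilters(long timeMillis) {
        String value = dataSource.valueAt(timeMillis);
        if (value == null) {
            return false;
        }
        for (Filter filter : filters) {
            if (!filter.accepts(value)) {
                return false;
            }
        }
        return true;
    }

    /** Presentation style; the only part a new data type has to supply. */
    protected abstract void render(String value);
}

/** Example extension: a data type presented as plain text. */
final class TextDataFrame extends AbstractDataFrame {
    String lastRendered;

    TextDataFrame(DataType dataType, DataSource dataSource) {
        super(dataType, dataSource);
    }

    @Override
    protected void render(String value) {
        lastRendered = value; // a real frame would draw into its window instead
    }
}
```

In this sketch the subclass knows nothing about whether its values come from a database or a socket, which mirrors the separation between presentation and data management that the text describes.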

Case Studies

Integral analysis of the HRI can be used at several stages in the development process of interactive robots. To demonstrate how, we consider case studies related to the development of an interactive humanoid robot, Robovie, and its smaller variant, Robovie-M. For each case study, a step-by-step description will clarify how the Interaction Debugger was employed for data analysis.

Development of a Sensor Network

An increasingly common technique for realizing intelligent robot behavior is to implement a sensor network around the robot. In this way, the robot can extend its sensing capabilities beyond its own body and use sensors in the environment to analyze people. This case study is related to the development of a sensor network in an environment where Robovie had to interact with many people standing around it. The goal was to make communication more natural between Robovie and the people. One element was looking in the direction of people while talking with them. Moreover, estimating people's behavior would be helpful to make the robot interact with them accordingly.

We decided to use pressure sensors in the floor around Robovie to realize the localization and behavior estimation of people. After installing these sensors, the challenge was to develop an algorithm capable of analyzing the sensor contacts created by the crowds and of distinguishing individual people within them. The next step would then be to make it detect walking patterns and map them to such behaviors as "approaching," "conversing," "passing by," etc.

We developed this algorithm by collecting data from people who interacted with Robovie on a floor that contains pressure sensors and then using the integral analysis approach to find patterns. The types of data collected included video, floor sensor data, and behavior states of the robot. The chosen environment was an office building in which the robot interacted with groups of visitors. After the experiment, a developer analyzed the data using the Interaction Debugger to find patterns in the floor sensor data (see Fig. 10) by comparing the video in which he observed the people moving on the floor with the floor sensors. He also considered the behavior states of the robot to find correlations with human behavior, i.e., how they reacted to it. This could be helpful for a behavior estimation algorithm.

Optimizing Thresholds for a Robot

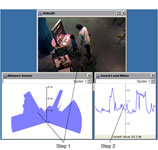

During a field trial, a number of robots were placed in the Osaka Science Museum to interact with people. This particular setting was part of our previous research activities [20]. Since Robovie-M was programmed to explain exhibits to visitors for this trial (see Fig. 11), it had to detect the presence of people and proactively draw their attention. Because Robovie-M has no integrated sensing capability, several sensors were placed around it to enable presence detection. For example, an infrared sensor was placed under the robot to measure the distance of objects in the environment, and a sound level meter distinguished background noise from human speech. For presence detection, these sensors are read by Robovie-M's control software and interpreted based on thresholds. Because every environmental situation is different, these thresholds have to be set manually.

The realtime mode of the Interaction Debugger was employed in this situation to optimize the presence detection thresholds. The robot developer used the following method (see Fig. 11):

- Step 1: Using visualization of the infrared sensor, for each occasion he analyzed the distance at which people approached the robot and the angles at which they started to interact with it. He used this information to set the corresponding thresholds.

- Step 2: Sound level meter visualization was analyzed to set the voice detection threshold of the robot. In the picture, peaks represent human speech.

Using the Interaction Debugger, the developer successfully optimized the presence detection mechanism in a relatively short time span.
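Threshold-based interpretation of the two sensors can be sketched as below. This is an illustration, not Robovie-M's control software: the class `PresenceDetector`, the units, and the example threshold values are assumptions, and the text does not state how the two checks are combined, so they are kept separate here.

```java
/** Hypothetical sketch (not from the paper) of threshold-based presence detection. */
final class PresenceDetector {
    private final double maxDistance;  // distance threshold; unit assumed, e.g. meters
    private final double minSpeechDb;  // sound level treated as speech; assumed value

    PresenceDetector(double maxDistance, double minSpeechDb) {
        this.maxDistance = maxDistance;
        this.minSpeechDb = minSpeechDb;
    }

    /** Step 1: an object closer than the threshold counts as a nearby person. */
    boolean personNearby(double measuredDistance) {
        return measuredDistance <= maxDistance;
    }

    /** Step 2: a sound level above the threshold counts as human speech. */
    boolean speechDetected(double soundLevelDb) {
        return soundLevelDb >= minSpeechDb;
    }
}
```

Tuning then consists of watching the live sensor visualizations and adjusting the two constructor arguments until the checks fire at the moments a human observer would expect.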

Evaluation of Hugging Behavior

During a field trial at a Japanese elementary school, Robovie was positioned in a classroom for 18 days to study the social interaction and the establishment of relationships between pupils and the robot. This particular setting was also used for our previous research activities [10].

Robovie is designed to sometimes exhibit hugging behavior during interaction with people if they keep reacting to it. However, hugging did not always appear successful. In this experiment, a hug was considered successful if the robot closed its arms around the user when he/she stepped toward it with open arms.

A robot developer used the Interaction Debugger to analyze the data recorded during 3 days of trials to debug the hugging behavior of the robot. His method can be summarized as follows (see Fig. 12):

- In the behavior-based situation loader, the user selected hug behavior to retrieve a list of all hugging events. By double clicking on an entry, the corresponding time is automatically loaded. Data windows were loaded using the menu.

- For each event, he analyzed the robot's touch sensors and the distance sensor conditions that activated hugging behavior. A highlighted touch sensor shows that it was pressed; when an object is near the robot, the blue lines of the ultrasonic sensor display are interrupted. The Interaction Debugger's time controller enabled him to study the data frame-by-frame.

- For each event, he also checked the success of the hugging behavior by reading the result values of the behavior state. Robovie outputs results for every finished behavior state, which are displayed in the table. For hugging, a zero value means that the behavior was interrupted or was not finished successfully.

- Finally, for each event, he annotated the success of the hug and the corresponding sensor conditions.

Twenty percent of the hugs were not successful. The ultrasonic sensor window revealed that the robot failed to detect objects in front of it during all unsuccessful hugs. This instability of the ultrasonic sensors indicates the cause of the problem. With this information, the developer debugged the robot and improved its hugging behavior. Although this is a simple case of debugging, we consider it effective analysis.
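The share of unsuccessful hugs follows directly from the result values of the behavior state, where zero marks an interrupted or unfinished hug. A minimal sketch of that count is given below; the class `HugResults` is hypothetical, and any input values used with it are illustrative rather than trial data.

```java
/** Hypothetical sketch (not from the paper): share of hugs that did not succeed. */
final class HugResults {
    private HugResults() {}

    /** A result value of zero marks an interrupted or unsuccessful hug. */
    static double failureRate(int[] resultValues) {
        if (resultValues.length == 0) {
            throw new IllegalArgumentException("no hugging events");
        }
        int failures = 0;
        for (int result : resultValues) {
            if (result == 0) {
                failures++;
            }
        }
        return (double) failures / resultValues.length;
    }
}
```

With five illustrative events of which one has a zero result, the method returns a failure rate of one in five.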

Studying Human Behavior in an Open Field

For the same field trial at the Osaka Science Museum as in the case study on optimizing thresholds, a researcher with a background in psychology carried out an empirical study on the behavior of people who interacted with Robovie-M to learn how the crowd around a robot influences the way people react to it. This knowledge might eventually be useful to improve the way a robot initiates interaction with people in different crowds.

For analyzing human behavior, he adopted an observation technique established in psychology based on analyzing data by annotations using a code protocol. In this case, he considered every event where someone interacted with the robot and coded human actions following a set of parameters that included such personal information as adult/child, alone/group, and gender, and information about the interaction such as type and cause of behavior, distance from robot, and crowdedness. A comparable example of such a coding system is the Facial Action Coding System (FACS) developed by Ekman et al. [21]. Today, FACS is widely used for facial emotion recognition. Coding data by hand enables the analyst to use an exploratory approach and simultaneously get quantifiable results.

The factors that played an important role were the positions of people and the environmental sound level. Both provide information about crowdedness. He used the Interaction Debugger as a tool to analyze how these factors influenced human behavior toward the robot by the following method (see Fig. 13):

- First, by moving the timeline slider to skip rapidly through the scenes, he located events in which people approached the robot. Whenever he noticed people approaching the robot, he used the play function to scrutinize the interaction event.

- For each interaction event, he coded its start and end times, the person's ID (e.g., B156), adult/child, alone/group, and gender in the annotation window.

- When analyzing the video, he checked every human action for behavior that matched one of the predefined behaviors considered interesting (e.g., moving, speaking, imitation, waving, bending, touching, etc.). To consider the human behavior in detail, he viewed the data frame-by-frame using the time controller.

- To analyze the distance and position of people from the robot, he observed the distance sensor window while navigating frame-by-frame. For every human action, he measured the distance between humans and the robot.

- He analyzed the sound level of the robot's environment by checking the decibel value in the sound level meter window. For every human action, he calculated the average sound level as an indicator of crowdedness.

- To analyze the cause of human behavior, he checked the type of behavior the robot performed in the 'Wizard of Oz' command window, which shows the active behavior states for Robovie-M.

- For each interaction event, he recorded every human action and the results from Steps 2-4 as coded annotation.

- After using the Interaction Debugger, he used spreadsheet software to interpret the codes and compute the statistical results. Example computations include the interaction time of people relative to distance from the robot or interaction time relative to crowdedness.
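The final step, turning coded annotations into statistics, was done in spreadsheet software. As a rough sketch of the same kind of computation, the Python fragment below groups interaction time by crowdedness and by distance. Everything in it is an assumption made for illustration: the `CodedEvent` fields, the 70 dB and 1 m thresholds, the plain arithmetic mean of decibel readings, and the sample values are hypothetical. Only the ID format (e.g., B156) comes from the text.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class CodedEvent:
    """One coded interaction event (hypothetical record layout)."""
    person_id: str     # e.g., "B156"
    start_s: float     # start of the interaction in seconds
    end_s: float       # end of the interaction in seconds
    distance_m: float  # measured distance between person and robot
    sound_db: list     # sound-level readings (dB) taken during the event


def crowdedness(event, threshold_db=70.0):
    """Label an event by its average sound level.

    Assumption: a plain arithmetic mean of the dB readings and an
    arbitrary 70 dB threshold stand in for the analyst's judgment.
    """
    return "crowded" if mean(event.sound_db) >= threshold_db else "quiet"


def proximity(event, threshold_m=1.0):
    """Label an event as near or far (the 1 m threshold is an assumption)."""
    return "near" if event.distance_m < threshold_m else "far"


def mean_interaction_time(events, key):
    """Average interaction duration (seconds) per group returned by key."""
    groups = defaultdict(list)
    for e in events:
        groups[key(e)].append(e.end_s - e.start_s)
    return {label: mean(durations) for label, durations in groups.items()}


# Illustrative codes only, not data from the study.
events = [
    CodedEvent("B156", 10.0, 40.0, 0.8, [72.0, 75.0, 74.0]),
    CodedEvent("B157", 60.0, 80.0, 1.5, [61.0, 63.0]),
    CodedEvent("B158", 100.0, 150.0, 0.6, [70.0, 71.0]),
    CodedEvent("B159", 200.0, 210.0, 2.0, [58.0, 60.0]),
]

by_crowd = mean_interaction_time(events, crowdedness)
by_distance = mean_interaction_time(events, proximity)
print(by_crowd)
print(by_distance)
```

Passing the grouping rule as a function keeps the aggregation independent of the coding scheme, so further coded parameters (adult/child, alone/group) could be analyzed the same way.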

His analysis revealed that people showed different patterns of approaching the robot in different crowd situations. This knowledge could be used later so that the robot can automatically infer that people are interested in it by measuring crowdedness and their movements. The results of this study were published by Nabe et al. [22].

Discussion

Contributions

This paper presented a multimodal approach to analyzing the HRI, which we believe is an essential part of the development process of interactive robots. By adopting this approach, robot developers can efficiently improve robot interactivity. Improving hugging behavior is a simple example, but more complex situations in which an integral approach could help are easily imaginable: for instance, the evaluation of speech recognition by analyzing audio data, background noise level, and intermediate variables that indicate recognized speech.

For psychologists, the interaction debugging approach is useful to aid qualitative data analysis techniques, such as the observation method, which is often adopted in human behavior analysis. Another case in which interaction debugging could have been useful was the development of a human friendship estimation model for communication robots [23]. In this research, interhuman interaction was analyzed in the presence of a humanoid robot.

Psychologists can evaluate human responses to robotic behavior by studying the HRI, which can help robot developers adjust the robot and optimize its behavior. We believe such an interdisciplinary approach is essential for improving the HRI.

Evaluation of the Approach

Unlike the evaluation of a method, evaluating a new methodology is difficult. Since no related methodology was available for comparison, we did not conduct a controlled experiment to evaluate the interaction debugging approach as a whole.

The interaction debugging approach can be decomposed into the following methods: "showing sensory information" and the "integration of multimodal information." To evaluate the effectiveness of the first method, for instance, we could conduct a controlled experiment that compares analysis results with/without sensory information or intermediate variables. However, as shown in the case studies, we often cannot accomplish the analysis goal without this information.

To test the integration of the multimodal information, we could compare the use of the Interaction Debugger with that of a common audio/video controller combined with specialized software for displaying sensory information. However, a tool that integrates these components is obviously more effective.

We feel that individual evaluation of both methods does not lead to a clear impression about the validity of the interaction debugging approach as a whole. Therefore, instead of conducting such experiments, in this paper we focused on the introduction of our integral approach by presenting case studies.

Evaluation of Software Usability

Since the Interaction Debugger is intended for people from different disciplines who do not have the same experience with the technical aspects of robotics, we consider usability a key evaluation point. Based on the usability goals specified by Preece et al. [24], "time to learn" and "retention over time" were selected as the main criteria for optimizing the user interface.

Within the process of optimizing the usability of the Interaction Debugger, the first method employed was expert reviewing, which is commonly used in software development to evaluate a user interface by determining conformance with a short list of design heuristics. We used Shneiderman's "eight golden rules of interface design" [25]. Furthermore, the case studies were part of a user-centered method to optimize the Interaction Debugger's user interface [26]. For each case study, user interaction with the software was studied, and feedback was requested to generate usability improvements.

From our observations of people who used the Interaction Debugger, we drew some conclusions that illustrate its current usability performance. The simple structure of its user interface made it easy for people to start working with it. If experienced with window-based graphical user interfaces (GUIs), new users only needed a brief explanation of the different functions to get started. For non-novice users, the software provides enough shortcuts to efficiently control the user interface. Examples include mouse scrolling to control time and key combinations for adding annotations.

A design problem worth mentioning concerns the organization of windows in the Interaction Debugger. Because the number of windows can become large for certain analysis tasks, having a friendly way of positioning them on the screen is helpful. We decided to let the user manage the organization, which means that the software remembers the last position of a window. This enables users to personalize the software to create a comfortable working environment.
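One simple way to remember window positions between sessions is to store them in a small settings file. The Python sketch below illustrates the idea only; it is not the Interaction Debugger's implementation (which is written in Java), and the file name, window names, and geometry fields are all hypothetical.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical default geometry for two analysis windows.
DEFAULT_LAYOUT = {
    "video_1": {"x": 0, "y": 0, "width": 320, "height": 240},
    "ultrasonic": {"x": 330, "y": 0, "width": 200, "height": 200},
}


def save_layout(path, layout):
    """Write the current window geometry to a JSON file."""
    Path(path).write_text(json.dumps(layout, indent=2))


def load_layout(path, default):
    """Read stored geometry; windows without a stored entry keep the default."""
    p = Path(path)
    if not p.exists():
        return dict(default)
    stored = json.loads(p.read_text())
    return {**default, **stored}


# Round trip in a temporary directory: the user moves one window, the
# position is saved on exit and restored at the next start.
with tempfile.TemporaryDirectory() as tmp:
    settings_file = Path(tmp) / "layout.json"
    first_start = load_layout(settings_file, DEFAULT_LAYOUT)
    moved = {"video_1": {"x": 50, "y": 80, "width": 320, "height": 240}}
    save_layout(settings_file, moved)
    restored = load_layout(settings_file, DEFAULT_LAYOUT)

print(restored["video_1"])
print(restored["ultrasonic"])
```

Merging stored entries over the defaults means that a window type added later still opens at a sensible position the first time it is used.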

Evaluation of Software Performance

The Java language, in which the Interaction Debugger is written, is sometimes considered too inefficient for high-performance applications. We overcame this limitation with several techniques, such as preprocessing the data and reusing objects to prevent excessive garbage collection.

When loading a time interval as explained in Section III-B3, the Interaction Debugger preprocesses the data to optimize navigation performance. We use an efficient dichotomic (binary) search algorithm to quickly locate the correct data when the playhead is moved. As an example, when we open two video panels, one audio panel, one ultrasonic sensor panel, one behavior-module panel, and one motion panel for 5 min of data, memory consumption is approximately 35 MB. Everything worked in real time when we ran this configuration on a computer with 512 MB of memory and an ordinary Pentium IV CPU.
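The playhead lookup can be illustrated with a binary search over sorted timestamps. The following Python sketch shows the general technique, assuming each data stream is preprocessed into a list of timestamps in ascending order; the function name `index_at` and the sample readings are hypothetical, and the actual Java implementation may differ.

```python
from bisect import bisect_right


def index_at(timestamps, playhead_s):
    """Index of the latest sample recorded at or before playhead_s.

    timestamps must be sorted in ascending order. If the playhead lies
    before the first sample, the first sample is returned.
    """
    if not timestamps:
        raise ValueError("stream contains no samples")
    i = bisect_right(timestamps, playhead_s) - 1
    return max(i, 0)


# Illustrative stream: sample times in seconds and ultrasonic readings.
timestamps = [0.0, 0.1, 0.2, 0.35, 0.5]
readings = [120, 118, 95, 60, 58]

i = index_at(timestamps, 0.3)
print(timestamps[i], readings[i])
```

Each lookup takes a number of comparisons logarithmic in the number of samples, which is why jumping the playhead stays responsive even for long recordings with irregular sampling intervals.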

Limitations

In this paper, we demonstrated only four case studies of the interaction debugging approach. All cases were related to independent projects and were not part of any large-scale engineering process because the software was developed only recently. Hence, the applicability and effectiveness of the approach for large-scale development remain unclear.

At the moment, the generalizability of our approach is also still unknown. Since it was only tested in a limited number of applications, we cannot determine in which cases the approach will be applicable and effective and in which cases it will not be. We believe that using an interaction debugging approach for more widespread robot development activities will foster a better view on this. We would, thus, like to encourage other robot developers to adopt this method and contribute to this field.

Another limitation of the current state of development concerns the modalities required for integral analysis. Currently, no guidelines exist that indicate which modalities a given analysis requires. In our examples, we used robot sensors, environment sensors, and intermediate variables. However, for certain purposes, one might not need all these data.

Conclusion

We believe that an integral approach to analyzing the HRI, one that involves multiple modalities, has become inevitable because the complexity of the interaction between robots and humans in everyday situations has increased dramatically. We developed the Interaction Debugger software to aid the analysis of interaction data. This tool allows us to conduct interdisciplinary projects that investigate the HRI by offering an environment that encourages collaboration between robot developers and psychologists. We demonstrated the practical applicability of the interaction debugging approach in four case studies that showed the necessity of observing different modalities. In all of them, using the Interaction Debugger led to an effective analysis of the HRI. However, we are aware that this only illustrates a limited number of applications and does not compare its effectiveness to other methodologies. The Interaction Debugger's functionality exceeds that of conventional video data analysis tools and, hence, a comparison could not provide valid insights. Instead, we hope to inspire researchers to adopt comparable methods to move toward a more quantitative and, hence, scientific approach to the HRI.

Acknowledgement

The authors thank S. Nabe, Y. Koide, and J. Lin for their valuable contributions toward the development and optimization of the Interaction Debugger.

References

- Sakagami, Y., Watanabe, R., Aoyama, C., Matsunaga, S., Higaki, N., & Fujimura, K. (2002). The intelligent ASIMO: system overview and integration, pp 2478-2483 vol.2473. | DOI: 10.1109/IRDS.2002.1041641

- Fujita, M. (2001). AIBO: Toward the Era of Digital Creatures. The International Journal of Robotics Research, 20(10), 781-794. | DOI: 10.1177/02783640122068092

- Ishiguro, H., Ono, T., Imai, M., Maeda, T., Kanda, T., & Nakatsu, R. (2001). Robovie: an interactive humanoid robot. International Journal of Industrial Robot, 28(6), 498-503. | DOI: 10.1108/01439910110410051

- Dautenhahn, K., Walters, M., Woods, S., Koay, K. L., Nehaniv, C. L., Sisbot, A., et al. (2006). How may I serve you?: a robot companion approaching a seated person in a helping context. Proceedings of the 1st ACM SIGCHI/SIGART conference on Human-robot interaction, Salt Lake City, Utah, USA, pp 172-179. | DOI: 10.1145/1121241.1121272

- Gockley, R., Forlizzi, J., & Simmons, R. (2006). Interactions with a moody robot. Proceedings of the 1st ACM SIGCHI/SIGART conference on Human-robot interaction, Salt Lake City, Utah, USA, pp 186-193. | DOI: 10.1145/1121241.1121274

- Breazeal, C. (2004). Social interactions in HRI: the robot view. Systems, Man and Cybernetics, Part C: Applications and Reviews, IEEE Transactions on, 34(2), 181-186. | DOI: 10.1109/TSMCC.2004.826268

- Steinfeld, A., Fong, T., Kaber, D., Lewis, M., Scholtz, J., Schultz, A., et al. (2006). Common metrics for human-robot interaction. Proceedings of the 1st ACM SIGCHI/SIGART conference on Human-robot interaction, Salt Lake City, Utah, USA, pp 33-40. | DOI: 10.1145/1121241.1121249

- Ishiguro, H., Ono, T., Imai, M., & Kanda, T. (2003). Development of an Interactive Humanoid Robot "Robovie" - An interdisciplinary approach. In Robotics Research: The Tenth International Symposium (Vol. 6/2003, pp. 179-192). Berlin: Springer.

- Dautenhahn, K., & Werry, I. (2002). A quantitative technique for analysing robot-human interactions, pp 1132-1138 vol.1132. | DOI: 10.1109/IRDS.2002.1043883

- Kanda, T., Hirano, T., Eaton, D., & Ishiguro, H. (2004). Interactive Robots as Social Partners and Peer Tutors for Children: A Field Trial. Human Computer Interaction (Special issues on human-robot interaction), 19(1-2), 61-84. | DOI: 10.1207/s15327051hci1901&2_4

- Siegwart, R., Arras, K. O., Bouabdallah, S., Burnier, D., Froidevaux, G., Greppin, X., et al. (2003). Robox at Expo.02: A large-scale installation of personal robots. Robotics and Autonomous Systems, 42(3-4), 203-222. | DOI: 10.1016/S0921-8890(02)00376-7

- Tanaka, F., Movellan, J. R., Fortenberry, B., & Aisaka, K. (2006). Daily HRI evaluation at a classroom environment: reports from dance interaction experiments. Proceedings of the 1st ACM SIGCHI/SIGART conference on Human-robot interaction, Salt Lake City, Utah, USA, pp 3-9. | DOI: 10.1145/1121241.1121245

- Burke, J., Murphy, R., Riddle, D., & Fincannon, T. (2004). Task Performance Metrics in Human-Robot Interaction: Taking a Systems Approach. Proceedings of the Performance Metrics for Intelligent Systems Workshop, Gaithersburg, MD.

- Noldus, L., Trienes, R. J. H., Hendriksen, A. H. M., Jansen, H., & Jansen, R. G. (2000). The Observer Video-Pro: new software for the collection, management, and presentation of time-structured data from videotapes and digital media files. Behavior Research Methods, Instruments, & Computers, 32(1), 197-206.

- Quek, F., Shi, Y., Kirbas, C., & Wu, S. (2002). VisSTA: A Tool for Analyzing Multimodal Discourse Data. Proceedings of the Seventh International Conference on Spoken Language Processing, Denver.

- Kipp, M. (2001). Anvil - A Generic Annotation Tool for Multimodal Dialogue. Proceedings of the 7th European Conference on Speech Communication and Technology (Eurospeech), Aalborg, pp 1367-1370.

- Kanda, T., Ishiguro, H., Imai, M., & Ono, T. (2004). Development and evaluation of interactive humanoid robots. Proceedings of the IEEE, 92(11), 1839-1850. | DOI: 10.1109/JPROC.2004.835359

- Sumi, Y., Ito, S., Matsuguchi, T., Fels, S., & Mase, K. (2004). Collaborative Capturing and Interpretation of Interactions. Proceedings of the Pervasive 2004 Workshop on Memory and Sharing of Experiences, Linz / Vienna, Austria, pp 1-7.

- Kooijmans, T., Kanda, T., Bartneck, C., Ishiguro, H., & Hagita, N. (2006). Interaction Debugging: an Integral Approach to Analyze Human-Robot Interaction. Proceedings of the 1st Annual Conference on Human-Robot Interaction (HRI2006), Salt Lake City, USA, pp 64-71. | DOI: 10.1145/1121241.1121254

- Nomura, T., Tasaki, T., Kanda, T., Shiomi, M., Ishiguro, H., & Hagita, N. (2005). Questionnaire-Based Research on Opinions of Visitors for Communication Robots at an Exhibition in Japan. Proceedings of the International Conference on Human-Computer Interaction (Interact 2005), Rome, pp 685-698. | DOI: 10.1007/11555261_55

- Ekman, P., & Friesen, W. V. (1978). Manual for the Facial Action Coding System and Action Unit Photographs. Consulting Psychologists Press.

- Nabe, S., Kanda, T., Hiraki, K., Ishiguro, H., Kogure, K., & Hagita, N. (2006). Analysis of human behavior to a communication robot in an open field. Proceedings of the 1st ACM SIGCHI/SIGART conference on Human-robot interaction, Salt Lake City, Utah, USA, pp 234-241. | DOI: 10.1145/1121241.1121282

- Nabe, S., Kanda, T., Hiraki, K., Ishiguro, H., & Hagita, N. (2005). Human friendship estimation model for communication robots, pp 196-201. | DOI: 10.1109/ICHR.2005.1573567

- Preece, J., Rogers, Y., & Sharp, H. (2002). Interaction Design: Beyond Human-Computer Interaction. New York: John Wiley & Sons Inc.

- Shneiderman, B. (1998). Designing the user interface: strategies for effective human-computer-interaction (3rd ed.). Reading, Mass: Addison Wesley Longman.

- Norman, D. A., & Draper, S. W. (1986). User centered system design: new perspectives on human-computer interaction. Hillsdale, N.J.: L. Erlbaum Associates.

This is a pre-print version | last updated February 5, 2008